Congratulations! VIPL's paper on ranking metric optimization, “Algorithm-Dependent Generalization of AUPRC Optimization: Theory and Algorithm” (authors: Peisong Wen, Qianqian Xu, Zhiyong Yang, Yuan He, Qingming Huang), has been accepted by IEEE TPAMI. IEEE TPAMI, i.e., IEEE Transactions on Pattern Analysis and Machine Intelligence, is a CCF rank-A, top-tier artificial intelligence journal with an impact factor (IF) of 23.6, as announced in 2023.

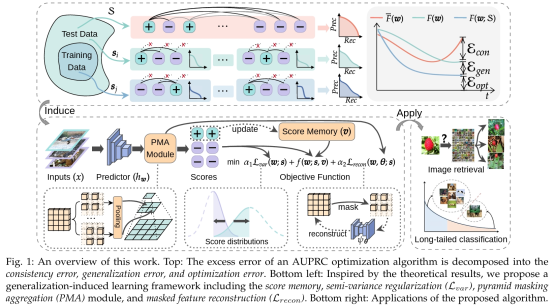

Area Under the Precision-Recall Curve (AUPRC) is a widely used metric in the machine learning community, especially in long-tailed classification and learning to rank. Although AUPRC optimization has been applied in various scenarios, its algorithm-dependent generalization performance is still an open problem. First, the standard framework for analyzing algorithm-dependent generalization, i.e., algorithmic stability analysis, is infeasible for AUPRC because it involves a non-decomposable listwise loss. Second, because AUPRC optimization is a compositional optimization problem, the algorithm involves a multi-variable recursion, which makes the stability analysis overly complicated. To address the first challenge, we extend instance-wise algorithm stability to listwise algorithm stability. To address the second, we consider the state transition matrices of the model stability and the intermediate variables, and simplify the calculation of the final stability using the matrix spectrum. On top of this, by combining the analysis of the consistency error and the optimization error, we provide a decomposition and joint analysis of the excess error of AUPRC optimization algorithms. The analysis shows the role of score variances, batch size, and sample diversity in the error trade-off, motivating us to boost generalization via semi-variance regularization and pyramid masking aggregation. Last but not least, empirical studies on image retrieval and long-tailed classification tasks further validate the effectiveness and soundness of the proposed framework.
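
For readers unfamiliar with the metric, the sketch below computes average precision, a common finite-sample estimate of AUPRC. It is an illustration only, not the optimization algorithm from the paper; the function name `average_precision` and the toy scores are assumptions made for this example, and tied scores are simply kept in input order.

```python
def average_precision(scores, labels):
    """Average precision: a common finite-sample estimate of AUPRC.

    Illustrative helper only (hypothetical name, not from the paper).
    `labels` holds 1 for positives and 0 for negatives.
    """
    # Rank examples from highest to lowest score (ties keep input order).
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    num_pos = sum(1 for _, y in ranked if y == 1)
    if num_pos == 0:
        raise ValueError("at least one positive label is required")

    hits = 0      # positives seen so far in the ranking
    total = 0.0   # running sum of precision at each positive's rank
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            total += hits / rank  # precision at this rank
    return total / num_pos


# Toy data (assumed values): positives land at ranks 1 and 3.
toy_scores = [0.9, 0.8, 0.7, 0.6]
toy_labels = [1, 0, 1, 0]
print(average_precision(toy_scores, toy_labels))
```

Each positive's contribution depends on how many other examples are ranked above it, so the metric cannot be written as a sum of independent per-example losses. This is the non-decomposable, listwise structure that the abstract refers to.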

Download: