Congratulations! VIPL's paper on surgical image segmentation, "Distillation-SAM: Knowledge Distillation Based Auto-prompt Embedding Learning for Surgical Image Segmentation" (authors: Tang Jiyang, Han Hu, Shan Shiguang, and Chen Xilin), was accepted by IEEE Transactions on Medical Imaging (TMI). TMI is a top-tier international journal in the fields of medical image processing and analysis, with an impact factor of 9.8 in 2025.

Robot-assisted surgery (RAS) is advancing from Level 1, characterized by purely manual control, toward Level 2, which features a collaborative synergy between surgeons and robotic systems. In this transition toward partial autonomy, surgical image segmentation is critical for intraoperative navigation and postoperative assessment. While supervised deep learning has advanced this field, traditional models often generalize poorly under domain shifts or high anatomical variability. To overcome these constraints, researchers have increasingly leveraged the Segment Anything Model (SAM) for its robust zero-shot capabilities. However, adapting SAM to the specialized surgical domain faces three primary challenges: (1) Manual Prompt Dependency: SAM’s accuracy relies heavily on high-quality manual prompts (e.g., points, boxes), a dependency that is incompatible with fully automatic surgical image segmentation. (2) Lack of Spatial Awareness: Existing auto-prompt methods primarily learn global, category-centric features and therefore fail to perceive image-specific object positions. (3) Weak Supervision Alignment: Traditional approaches depend on indirect supervision via the final segmentation loss, so the resulting auto-prompt embeddings struggle to match the efficacy of human-provided prompts.

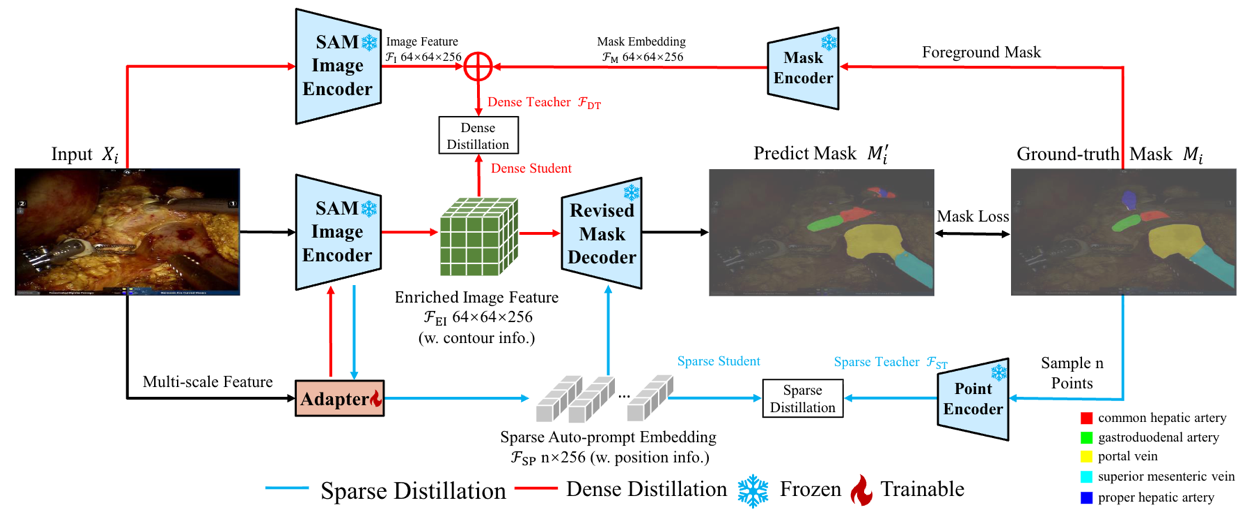

To address these issues, we propose Distillation-SAM, an approach designed to achieve high-precision multi-class semantic segmentation for surgical images while keeping the SAM encoder and decoder entirely frozen. It has three components: (1) Lightweight Adapter Branch: We introduce a PVT-tiny-based adapter that interacts with the SAM image encoder via cross-attention to extract multi-scale, spatially aware prompt embeddings from the input image. (2) Multi-Dimensional Prompt Distillation: We shift knowledge distillation (KD) from the output level to the embedding level. By using "teacher embeddings" derived from ground-truth masks as direct constraints, we align the adapter-generated sparse prompts (simulating point/box prompt information) and dense prompts (simulating contour cues) with SAM's native prompt space. (3) Refined Multi-Class Semantic Head: We add a minimal MLP branch at the end of the mask decoder to predict category scores, extending SAM’s binary segmentation capability to multi-class semantic segmentation.
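The embedding-level distillation described above can be illustrated with a minimal, dependency-free sketch. It is not the authors' implementation: the use of mean squared error as the alignment term, the function names `mse` and `total_loss`, and the weights `w_sparse` and `w_dense` are all assumptions made for illustration. The only idea taken from the text is that the student (adapter-generated) sparse and dense prompt embeddings are pulled directly toward teacher embeddings, in addition to the usual segmentation loss.

```python
from typing import Sequence

Vector = Sequence[float]


def mse(student: Vector, teacher: Vector) -> float:
    """Mean squared error between two equally sized, flattened embeddings."""
    if len(student) != len(teacher):
        raise ValueError("student and teacher embeddings must have the same size")
    if not student:
        raise ValueError("embeddings must be non-empty")
    return sum((s - t) ** 2 for s, t in zip(student, teacher)) / len(student)


def total_loss(
    seg_loss: float,
    student_sparse: Vector,
    teacher_sparse: Vector,
    student_dense: Vector,
    teacher_dense: Vector,
    w_sparse: float = 1.0,  # hypothetical weight, not taken from the paper
    w_dense: float = 1.0,   # hypothetical weight, not taken from the paper
) -> float:
    """Segmentation loss plus two embedding-level distillation terms.

    The distillation terms supervise the prompt embeddings directly,
    instead of relying only on the final segmentation loss.
    """
    kd_sparse = mse(student_sparse, teacher_sparse)
    kd_dense = mse(student_dense, teacher_dense)
    return seg_loss + w_sparse * kd_sparse + w_dense * kd_dense


# Toy example: the sparse embedding is misaligned, the dense one matches.
loss = total_loss(
    seg_loss=0.5,
    student_sparse=[0.0, 2.0],
    teacher_sparse=[0.0, 0.0],
    student_dense=[1.0, 1.0],
    teacher_dense=[1.0, 1.0],
)
```

In this toy case only the sparse term contributes to the distillation penalty, so the gradient signal (in a real training setup) would push the adapter's sparse prompt toward the teacher while leaving the already aligned dense prompt alone.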

Experiments demonstrate that Distillation-SAM requires training only 13.8M additional parameters (about 10% of the SAM backbone). This approach preserves SAM's strong pre-trained generalization priors while effectively preventing overfitting on small-scale medical datasets. On three representative surgical image segmentation benchmarks, IVIS (https://github.com/HanHuCAS/SurgNet), EndoVis2017, and CholecSeg8k, our method outperforms existing SAM-based auto-prompt learning methods (e.g., AdaptiveSAM, SurgicalSAM) and achieves state-of-the-art accuracy across vessel, instrument, and tissue segmentation tasks.

The code for this work will be available on GitHub: https://github.com/tjy828/DistillSAM

Figure 1. Framework of Distillation-SAM. Distillation-SAM generates auto-prompt embeddings through a lightweight adapter and aligns them with teacher embeddings via knowledge distillation, thereby achieving automatic SAM-based surgical image segmentation without human prompting.
