1. Overview

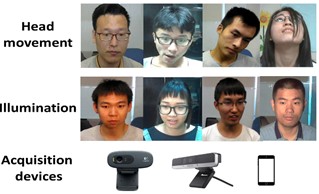

The VIPL-HR database is a database for remote heart rate (HR) estimation from face videos under less-constrained situations. It contains 2,378 visible-light (VIS) videos and 752 near-infrared (NIR) videos of 107 subjects. Nine different conditions, including various head movements and illumination conditions, are taken into consideration. All the videos were recorded using a Logitech C310, a RealSense F200, and the front camera of a HUAWEI P9 smartphone, and the ground-truth HR was recorded using a CONTEC CMS60C BVP sensor (an FDA-approved device). More detailed information about the database can be found in the Readme file in the package.

2. Evaluation Protocol

For methods that do not need training, we suggest using all the videos for testing. For machine learning methods, a five-fold subject-exclusive testing protocol is suggested, so that no subject appears in both the training and the testing data of any fold. The detailed partition of the subjects is also provided in the package. The average HR is suggested as the ground-truth HR for each video.
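The protocol above can be sketched in a few lines. This is a minimal illustration, not part of the official release: the function names are hypothetical, and it assumes the subject partition has already been loaded as a list of five collections of subject IDs and that the ground-truth HR of a video is available as a list of per-sample readings in bpm (the actual file formats are described in the package's Readme). It computes the suggested per-video ground truth, checks that a partition is subject-exclusive, and yields one train/test split per fold.

```python
from statistics import mean


def video_ground_truth_hr(hr_samples):
    """Average of the per-sample HR readings (bpm) recorded for one video."""
    if not hr_samples:
        raise ValueError("no HR samples for this video")
    return mean(hr_samples)


def is_subject_exclusive(folds):
    """Return True if no subject ID appears in more than one fold.

    `folds` is assumed to be a list of collections of subject IDs, e.g.
    parsed from the partition file shipped with the database.
    """
    seen = set()
    for fold in folds:
        fold_ids = set(fold)
        if seen & fold_ids:  # a subject already used in an earlier fold
            return False
        seen |= fold_ids
    return True


def train_test_splits(folds):
    """Yield (train_subjects, test_subjects), holding out each fold in turn."""
    for i, test in enumerate(folds):
        train = [s for j, fold in enumerate(folds) if j != i for s in fold]
        yield train, list(test)


if __name__ == "__main__":
    # Hypothetical subject IDs, for illustration only; use the official
    # partition from the package for reported results.
    folds = [[1, 2], [3, 4], [5, 6], [7, 8], [9, 10]]
    assert is_subject_exclusive(folds)
    for train, test in train_test_splits(folds):
        print("train:", train, "test:", test)
    print(video_ground_truth_hr([72, 74, 76]))
```

Checking exclusivity explicitly is cheap and catches the most common mistake in this setting: splitting by video instead of by subject, which lets the same face appear in both training and testing data.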

3. Contact

Hu Han (hanhu[at]ict.ac.cn), Institute of Computing Technology, Chinese Academy of Sciences

Yunchi Zhang (zhangyunchi19[at]mails.ucas.ac.cn), Institute of Computing Technology, Chinese Academy of Sciences

4. Download

The VIPL-HR dataset is released to universities and research institutes for research purposes only. To request a copy of the VIPL-HR database, please do as follows:

• Download the VIPL-HR Database Release Agreement, read it carefully, and complete it appropriately. Note that the agreement must be signed by a full-time staff member (that is, students are not acceptable). Then scan the signed agreement and send it to Dr. Han (hanhu[at]ict.ac.cn) from an official email address (that is, a university or institute email address; non-official email addresses such as Gmail and 163 are not acceptable). Once we receive your email, we will provide the download link to you.

• If you use the VIPL-HR database, you are encouraged to cite the following papers:

[1] Xuesong Niu, Shiguang Shan*, Hu Han, and Xilin Chen, "RhythmNet: End-to-end Heart Rate Estimation from Face via Spatial-temporal Representation," IEEE Transactions on Image Processing (T-IP), vol. 29, pp. 2409-2423, 2020.

[2] Xuesong Niu, Hu Han, Shiguang Shan, and Xilin Chen, "VIPL-HR: A Multi-modal Database for Pulse Estimation from Less-constrained Face Video," Asian Conference on Computer Vision, 2018.
